Batch-download files from a list of URLs to your own server and ZIP them for easier download to your computer.

batch-download.php

Download many links from a website easily. Have you ever wanted to download a batch of PDFs, podcasts, or other files from a website without right-clicking and choosing 'Save as' for every single one? Batch Link Downloader, a DownThemAll! alternative for Chrome, solves this problem. This is its first release.

Oct 29, 2019: Batch URL Downloader. Provide a list of URLs and download a large number of files in one quick operation with this very basic but easy-to-use program.

Jul 21, 2017: Downloading a List of URLs Automatically. I recently needed to download a bunch of files from Amazon S3, but I didn't have direct access to the bucket; I only had a list of URLs. There were too many to fetch one by one, so I wanted to fetch them automatically.

Yosko / batch-download.php. Created Dec 16, 2013.

Jul 25, 2019: URL Extractor 4.7 batch-processes files and extracts URLs.

| <!doctype html> |

| <!-- |

| PHP Batch Download Script |

| @author Yosko <[email protected]> |

| @copyright none: free and opensource |

| @link http://www.yosko.net/article32/snippet-05-php-telechargement-de-fichiers-par-lots |

| --> |

| <html lang='en-US'> |

| <head> |

| <meta charset='UTF-8'> |

| <title>Batch Downloader</title> |

| <style> |

| textarea { |

| width: 100%; |

| min-height: 10em; |

| } |

| </style> |

| </head> |

| <body> |

| <h1>Batch Downloader</h1> |

| <?php |

| //config |

| error_reporting(E_ALL | E_STRICT); |

| ini_set('display_errors','On'); |

| set_time_limit(180); //optional: to avoid timeout |

| ob_flush(); flush(); |

| //recursive rmdir from php.net |

| function delTree($dir) { |

| $files = array_diff(scandir($dir), array('.','..')); |

| foreach ($files as $file) { |

| (is_dir($dir.'/'.$file)) ? delTree($dir.'/'.$file) : unlink($dir.'/'.$file); |

| } |

| return rmdir($dir); |

| } |

| $errors = array(); |

| $urlString = ''; |

| //a list of URLs to download was given |

| if(isset($_POST['urls']) |

| && isset($_POST['directory']) |

| && strpos($_POST['directory'], '..') === false |

| && strpos($_POST['directory'], '/') === false |

| ) { |

| ?> |

| <ul> |

| <?php |

| $urls = explode(PHP_EOL, $_POST['urls']); |

| $directory = trim($_POST['directory']); |

| if(empty($directory)) { $directory = 'files'; } |

| $zipFile = $directory.'.zip'; |

| //temporary directory to put the files into |

| if(!file_exists($directory)) { |

| mkdir($directory); |

| } |

| //temporary archive file for download |

| if(file_exists($zipFile)) { |

| unlink($zipFile); |

| } |

| //try and download each file |

| foreach($urls as $url) { |

| $url = trim($url); |

| $filename = basename($url); |

| $success = copy($url, $directory.DIRECTORY_SEPARATOR.$filename); |

| if($success) { |

| echo '<li>'.$filename.' : OK</li>'; |

| } else { |

| $errors[] = $url; |

| echo '<li>'.$filename.' : KO (source = <a href="'.$url.'">'.$url.'</a>)</li>'; |

| } |

| ob_flush(); flush(); |

| } |

| //zip files |

| $zip = new ZipArchive(); |

| if ($zip->open($zipFile, ZIPARCHIVE::CREATE) === true) { |

| foreach (glob($directory.'/*') as $file) { |

| $zip->addFile($file); |

| } |

| $zip->close(); |

| } |

| //delete directory |

| delTree($directory); |

| ?> |

| </ul> |

| <p> |

| <?php echo count($urls); ?> file(s), |

| <?php echo count($urls) - count($errors); ?> success(es), |

| <?php echo count($errors); ?> error(s) |

| </p> |

| <p>Download <a href='<?php echo $zipFile; ?>'><?php echo $zipFile; ?></a></p> |

| <p>Errors :</p> |

| <ul> |

| <?php foreach($errors as $error) { ?> |

| <li><?php echo $error; ?></li> |

| <?php } // end loop on errors ?> |

| </ul> |

| <?php } // end form post ?> |

| <form method='POST' target=''> |

| <input type='text' name='directory' placeholder='Destination file...'>.zip |

| <textarea name='urls' placeholder='Put each URL on a different line'> |

| <?php |

| foreach($errors as $error) { |

| echo $error.PHP_EOL; |

| } |

| ?></textarea> |

| <input type='submit' name='submitURLs' value='Download'> |

| </form> |

| </body> |

| </html> |
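
The script's only guard on the `directory` field is the `strpos` check for `..` and `/`, and it derives each local file name with `basename($url)`, which keeps any query string in the name. The following is a minimal sketch of slightly stricter helpers, not the author's code: `isSafeDirectoryName` and `filenameFromUrl` are hypothetical names, and the allowed-character whitelist and the `download` fallback are assumptions.

```php
<?php
// Hypothetical helpers, not part of the original gist.

// Accept only simple names: must start with a letter or digit, then
// letters, digits, dot, underscore or hyphen. Rejects empty strings,
// path separators, names made only of dots, and anything containing '..'.
function isSafeDirectoryName(string $name): bool {
    if ($name === '' || strpos($name, '..') !== false) {
        return false;
    }
    return preg_match('/\A[A-Za-z0-9][A-Za-z0-9._-]*\z/', $name) === 1;
}

// Derive a local file name from the URL path only, ignoring the query string.
// Falls back to $default (an assumed placeholder) when no last segment exists.
function filenameFromUrl(string $url, string $default = 'download'): string {
    $path = parse_url(trim($url), PHP_URL_PATH);
    $name = is_string($path) ? basename($path) : '';
    return $name !== '' ? $name : $default;
}
```

In the script, these could stand in for the two `strpos` conditions and for the `basename($url)` call respectively.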


Wednesday, December 04, 2019

The latest version of this tutorial is available here.

Downloading images one by one can be tedious. Forget the old technique of 'right click and save image.' There is a smarter way to handle such a boring job: Octoparse can automate the process in a few seconds. You can easily download images for free from Instagram, Twitter, Amazon, Pinterest and more. Here are two easy steps to free your hands.

Step 1. Scrape image URLs from websites using Octoparse and export the extracted data to Excel. See the post "How to Build an Image Crawler without Coding" for step-by-step instructions.

Step 2. Choose a downloader and import the extracted list of image URLs into it.

Tab Save

Type: Chrome Extension

Link: https://chrome.google.com/webstore/detail/tab-save/lkngoeaeclaebmpkgapchgjdbaekacki

Note: Simply paste in the URLs, and it will download the images one by one.

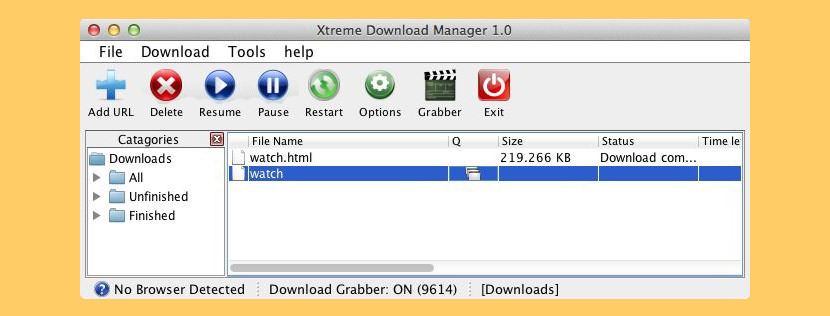

Free Download Manager

Type: Desktop software (supports both Windows and macOS)

Link: https://www.freedownloadmanager.org/download.htm

Note: It supports pasting in URLs from your clipboard to create batch downloads. Fast and efficient, especially handy for bulk downloads.
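
As a scripted alternative to the downloaders above, the exported list can be cleaned up in PHP, the language of the script earlier on this page, before it is fetched. The sketch below covers only turning the exported text into a clean list of URLs; `parseUrlList` is a hypothetical helper, and it assumes the export holds one URL per line.

```php
<?php
// Hypothetical helper: turn pasted or exported text into a clean URL list.
// Assumes one URL per line; blank lines, duplicates and entries that do not
// start with http:// or https:// are dropped.
function parseUrlList(string $text): array {
    $urls = [];
    // \R matches any line ending (\r\n, \n or \r).
    foreach (preg_split('/\R/', $text) as $line) {
        $url = trim($line);
        if ($url === '' || !preg_match('#\Ahttps?://#i', $url)) {
            continue;
        }
        $urls[$url] = true; // array keys de-duplicate and keep first-seen order
    }
    return array_keys($urls);
}

// Example input with Windows line endings, a blank line, a duplicate
// and a non-URL entry (example.com is a placeholder host).
$list = parseUrlList("https://example.com/a.jpg\r\n\r\nhttps://example.com/b.jpg\nhttps://example.com/a.jpg\nnot a url");
print_r($list);
```

Each entry can then be passed to `copy($url, $destination)` as the script above does; fetching remote URLs that way requires PHP's `allow_url_fopen` setting to be enabled.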
